AWS's S3 documentation makes multipart uploads sound like a solved problem. It's not. Not if you're expecting a real user on unreliable hotel Wi-Fi to push a 20 GB video file through your app without incident.

Build a Next.js app on the standard AWS SDK or plain presigned URLs, and you'll hit a wall fast. Presigned URLs give you the plumbing for chunked uploads. They do nothing about frontend state.

Picture this: your user's progress bar inches to 80%. Laptop sleeps. Connection drops. Because SDK state lives only in memory, that context is gone. A hard network cut forces a restart from zero. We've watched users attempt the same upload three times in a row. Each retry generates a support ticket, and the frustration compounds. It's not a UX problem. It's an architecture problem.

So what's the workaround? You start manually tracking upload IDs in IndexedDB, juggling arrays of ETags, and writing brittle polling loops just to fetch fresh presigned URLs before they expire on multi-hour transfers. It's a mess, and you know it's a mess while you're writing it.
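To make that bookkeeping concrete, here is a minimal sketch of the state you end up persisting by hand. The function names and the state shape are hypothetical, chosen for illustration; only the `{ PartNumber, ETag }` pairs in ascending order reflect what S3's CompleteMultipartUpload call expects.

```javascript
// Hypothetical shape of the state a hand-rolled resumable upload has to persist
// (for example in IndexedDB) so it can survive a page reload.
function createUploadState(uploadId, key, fileSize, partSize) {
  return { uploadId, key, fileSize, partSize, etags: {} };
}

// Record the ETag S3 returned for one uploaded part (part numbers start at 1).
function recordPart(state, partNumber, etag) {
  return { ...state, etags: { ...state.etags, [partNumber]: etag } };
}

// Work out which parts still need uploading after a crash or reload.
function missingParts(state) {
  const total = Math.ceil(state.fileSize / state.partSize);
  const missing = [];
  for (let n = 1; n <= total; n += 1) {
    if (!(n in state.etags)) missing.push(n);
  }
  return missing;
}

// Build the ascending part list that CompleteMultipartUpload requires.
function completionParts(state) {
  return Object.keys(state.etags)
    .map(Number)
    .sort((a, b) => a - b)
    .map((n) => ({ PartNumber: n, ETag: state.etags[n] }));
}
```

Every one of these functions is code you own, test, and debug, and none of it covers refreshing expired presigned URLs yet.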

Why We Switched to TUS

We stopped patching raw multipart upload scripts. We moved to the TUS protocol.

TUS is an open protocol built specifically for resumable uploads. It doesn't just split files and cross its fingers. It creates a negotiated state between client and server. The real kind, not the fake kind you'd get from a half-baked retry loop.

When an upload breaks, the client doesn't guess at the offset. It explicitly asks the server which bytes were safely committed. The server replies with the last confirmed boundary. The client sends only the missing pieces. No wasted bandwidth. No starting over.
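In TUS 1.0 terms, that exchange is a `HEAD` request that returns an `Upload-Offset` header, followed by a `PATCH` that starts at that offset. The sketch below shows the idea with plain `fetch`. The helper names are our own, and `uploadUrl` is assumed to be the upload URL the server returned when the upload was created. In practice a client such as tus-js-client or Uppy does this for you.

```javascript
const TUS_VERSION = '1.0.0';

// Headers for the PATCH request that continues an upload from `offset`.
function patchHeaders(offset) {
  return {
    'Tus-Resumable': TUS_VERSION,
    'Upload-Offset': String(offset),
    'Content-Type': 'application/offset+octet-stream',
  };
}

// Parse the Upload-Offset header value, rejecting missing or malformed values.
function parseOffset(headerValue) {
  const offset = Number.parseInt(headerValue, 10);
  if (!Number.isInteger(offset) || offset < 0) {
    throw new Error(`Invalid Upload-Offset: ${headerValue}`);
  }
  return offset;
}

// Ask the server how many bytes it has committed, then send only the rest.
// `file` is a Blob/File; `fetchImpl` is injectable so the flow can be tested.
async function resumeUpload(uploadUrl, file, fetchImpl = fetch) {
  const head = await fetchImpl(uploadUrl, {
    method: 'HEAD',
    headers: { 'Tus-Resumable': TUS_VERSION },
  });
  if (!head.ok) throw new Error(`HEAD failed with status ${head.status}`);

  const offset = parseOffset(head.headers.get('Upload-Offset'));
  if (offset >= file.size) return offset; // nothing left to send

  const patch = await fetchImpl(uploadUrl, {
    method: 'PATCH',
    headers: patchHeaders(offset),
    body: file.slice(offset), // only the bytes the server has not confirmed
  });
  if (patch.status !== 204) throw new Error(`PATCH failed with status ${patch.status}`);

  return parseOffset(patch.headers.get('Upload-Offset'));
}
```

The point is that the offset comes from the server, not from whatever the client last remembered.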

What Actually Worked For Us

Most engineers start by rolling their own TUS layer. They spin up a tusd Go binary on EC2 or inside Docker. Don't, unless you genuinely enjoy keeping that infrastructure alive at 2 AM.

Here's what happens: you're suddenly responsible for a local storage backend, orphaned temp file cleanup, and auth state that runs completely separate from your main API. You originally chose direct-to-S3 to sidestep a clunky middleware layer. Now you've built one anyway.

The only approach that has held up for us is streaming TUS chunks straight into S3 multipart upload parts, with no local disk or intermediate buffer. That keeps browser uploads secure at scale without turning your backend into a bottleneck.
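Streaming into multipart parts inherits S3's limits: every part except the last must be at least 5 MiB, and one upload can hold at most 10,000 parts. Here is a rough sketch of picking a chunk size that respects both. It assumes the relay maps one TUS chunk to one S3 part, which is our simplification for illustration and not a statement about how any particular service does it. The function names and the 8 MiB default are hypothetical.

```javascript
const MiB = 1024 * 1024;
const MIN_PART_SIZE = 5 * MiB; // S3 minimum for every part except the last
const MAX_PARTS = 10000;       // S3 maximum number of parts per upload

// Pick a part size that is at least the S3 minimum, at least the preferred
// size, and large enough that the file fits in MAX_PARTS parts.
function choosePartSize(fileSize, preferred = 8 * MiB) {
  const neededForPartLimit = Math.ceil(fileSize / MAX_PARTS);
  return Math.max(MIN_PART_SIZE, preferred, neededForPartLimit);
}

// Number of parts the file will be split into at the given part size.
function partCount(fileSize, partSize) {
  return Math.max(1, Math.ceil(fileSize / partSize));
}
```

For a 20 GiB file, the 8 MiB default already fits comfortably under the part limit. The size only has to grow once files get large enough that 8 MiB parts would exceed 10,000. If you use Uppy, the result could be passed as the Tus plugin's `chunkSize` option.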

Here's how we wire it up in a Next.js frontend using Uppy and Rilavek as a TUS endpoint.

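```jsx
'use client'; // needed under the Next.js App Router; harmless in the Pages Router

import React, { useEffect, useState } from 'react';
import Uppy from '@uppy/core';
import Tus from '@uppy/tus';
import GoldenRetriever from '@uppy/golden-retriever';
import { Dashboard } from '@uppy/react';

import '@uppy/core/dist/style.min.css';
import '@uppy/dashboard/dist/style.min.css';

export default function ResumableUploader({ pipeId, uploadToken }) {
  // Create Uppy once per mount. Calling `new Uppy()` in the render body would
  // hand <Dashboard> a fresh instance without plugins on every re-render.
  const [uppy] = useState(() =>
    new Uppy({
      id: 'video-ingestion',
      autoProceed: false,
      debug: true,
    })
      .use(Tus, {
        endpoint: `https://upload.rilavek.com/pipes/${pipeId}/files/`,
        // `resume` and `autoRetry` come from older @uppy/tus releases;
        // check whether your installed version still accepts them.
        resume: true,
        autoRetry: true,
        retryDelays: [0, 1000, 3000, 5000],
        headers: {
          Authorization: `Bearer ${uploadToken}`,
        },
      })
      // Persist selected files and progress for 24 hours across tab closes.
      .use(GoldenRetriever, { expires: 24 * 60 * 60 * 1000 })
  );

  // Keep the endpoint and token current if the props change after mount.
  useEffect(() => {
    uppy.getPlugin('Tus').setOptions({
      endpoint: `https://upload.rilavek.com/pipes/${pipeId}/files/`,
      headers: { Authorization: `Bearer ${uploadToken}` },
    });
  }, [uppy, pipeId, uploadToken]);

  return <Dashboard uppy={uppy} />;
}
```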

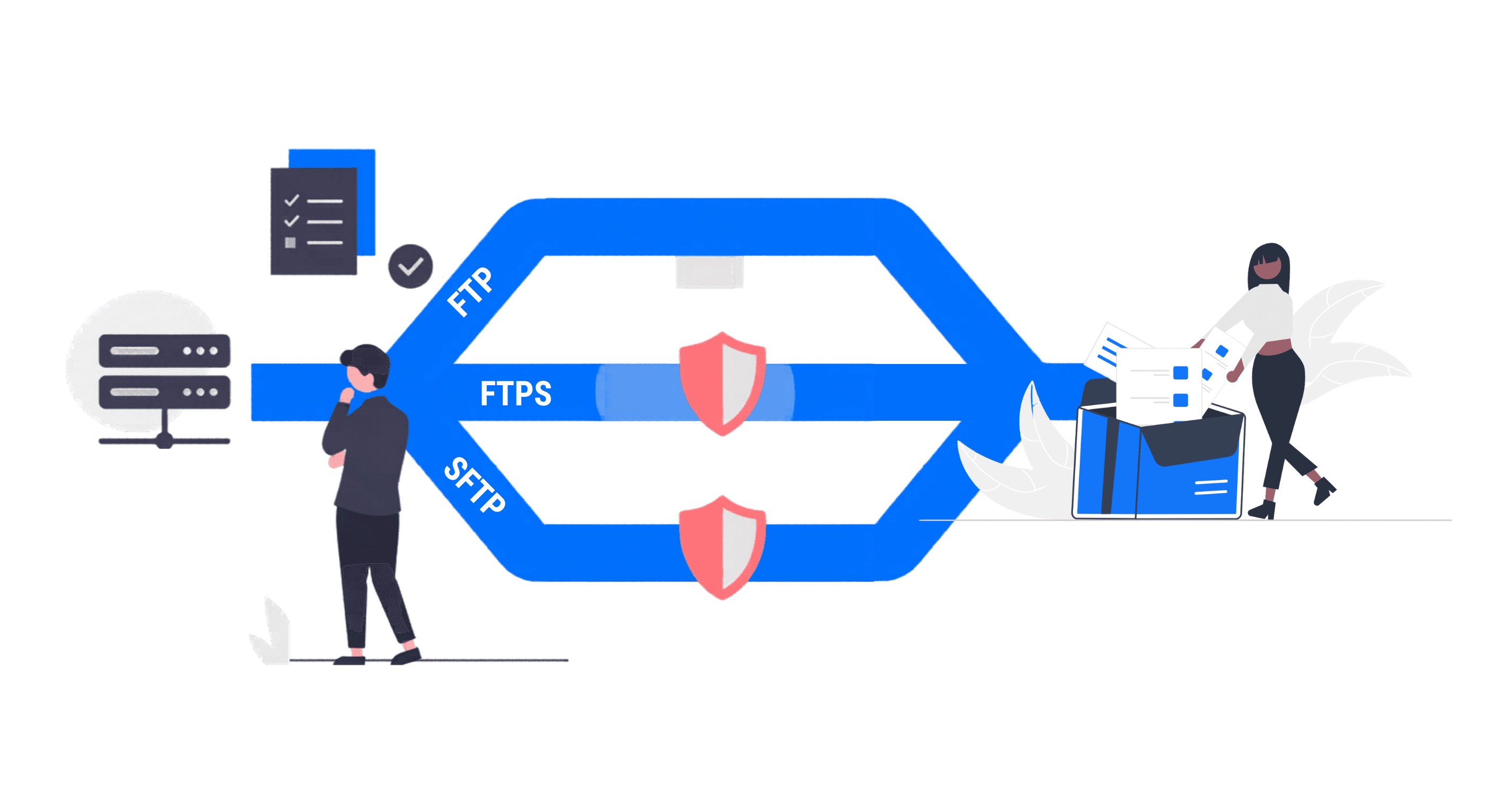

The uploadToken gets passed directly into the component. Never put your root API keys anywhere near a browser environment. Not in client-exposed environment variables such as NEXT_PUBLIC_*, not hardcoded, not ever.

Your backend requests a short-lived, scoped token using your actual credentials, then hands that temporary string to the client. If someone intercepts the network payload, the damage is contained to a single pipe.

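```js
// Server-side only: the secret key must never reach the browser.
const response = await fetch("https://rilavek.com/api/v1/tokens", {
  method: "POST",
  headers: {
    "Authorization": `Bearer sk_live_YOUR_SECRET_KEY`,
    "Content-Type": "application/json"
  },
  body: JSON.stringify({
    pipeId: "pipe_123abc"
  })
});

if (!response.ok) {
  throw new Error(`Token request failed: ${response.status}`);
}

const { token } = await response.json();
```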
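One way to keep that call out of the client bundle is to wrap it in a small server-side helper and expose only the resulting token through your own API route. The sketch below assumes the same endpoint and `{ token }` response shape as the snippet above. The helper names and the `RILAVEK_SECRET_KEY` environment variable are hypothetical.

```javascript
const TOKEN_URL = 'https://rilavek.com/api/v1/tokens';

// Build the request for a scoped upload token. Kept separate from the network
// call so it can be checked without hitting the API.
function buildTokenRequest(pipeId, secretKey) {
  if (!secretKey) throw new Error('Missing secret key (server environment only)');
  if (!pipeId) throw new Error('Missing pipeId');
  return {
    url: TOKEN_URL,
    init: {
      method: 'POST',
      headers: {
        Authorization: `Bearer ${secretKey}`,
        'Content-Type': 'application/json',
      },
      body: JSON.stringify({ pipeId }),
    },
  };
}

// Exchange the secret key for a short-lived token scoped to one pipe.
async function mintUploadToken(pipeId, secretKey, fetchImpl = fetch) {
  const { url, init } = buildTokenRequest(pipeId, secretKey);
  const response = await fetchImpl(url, init);
  if (!response.ok) {
    throw new Error(`Token request failed: ${response.status}`);
  }
  const { token } = await response.json();
  return token;
}
```

A route handler would then call something like `mintUploadToken(pipeId, process.env.RILAVEK_SECRET_KEY)` after checking that the signed-in user may write to that pipe, and return only the token to the browser.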

The "Micro-Insight" We Learned the Hard Way

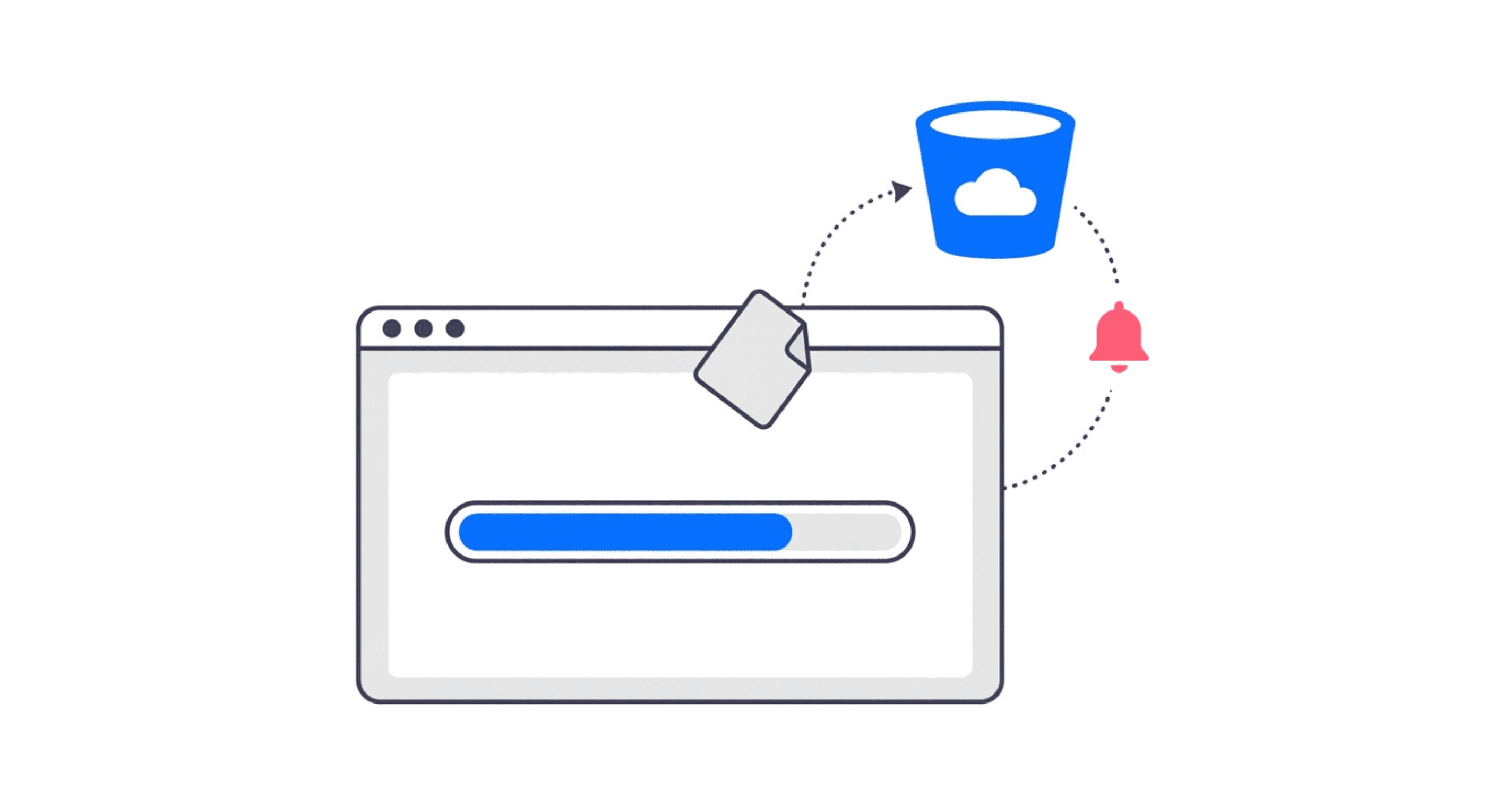

Configuring the TUS plugin isn't enough. If you're using Uppy, you also need GoldenRetriever. This one trips up almost everyone.

Without it, TUS only resumes sessions that are still active in memory. Close the tab? Memory is gone. The resume capability you thought you had? Gone with it.

GoldenRetriever persists the upload state in browser storage (localStorage for metadata, IndexedDB for file blobs): the same state your TUS client needs to find its place in the stream.

That's the real difference between "resumable" as a marketing claim and resumable as a working guarantee. With it enabled, a user can close their laptop, come back the next morning, and pick up at the exact byte where the transfer died.

Stop Building Ad-Hoc Adapters

Don't sink weeks into debugging ETag mismatches, chunk offset drift, and CORS header archaeology.

Rilavek ships a TUS-compatible HTTP endpoint that streams uploads straight to your S3 bucket with no intermediate storage. Your team ships the feature instead of maintaining the protocol.

Technical Reference & Next Steps

- TUS Endpoint Setup: For the full URL structure and header specifications, see the HTTP (TUS) Source Documentation.

- Authentication Deep Dive: Learn how to securely generate temporary Sender Tokens for your frontend clients.

- Infrastructure: Not using AWS? Rilavek supports any S3-compatible data store, including Cloudflare R2 and Wasabi, using the same TUS interface.
