AWS docs make S3 event notifications sound perfectly solved. They aren't. Not even close.

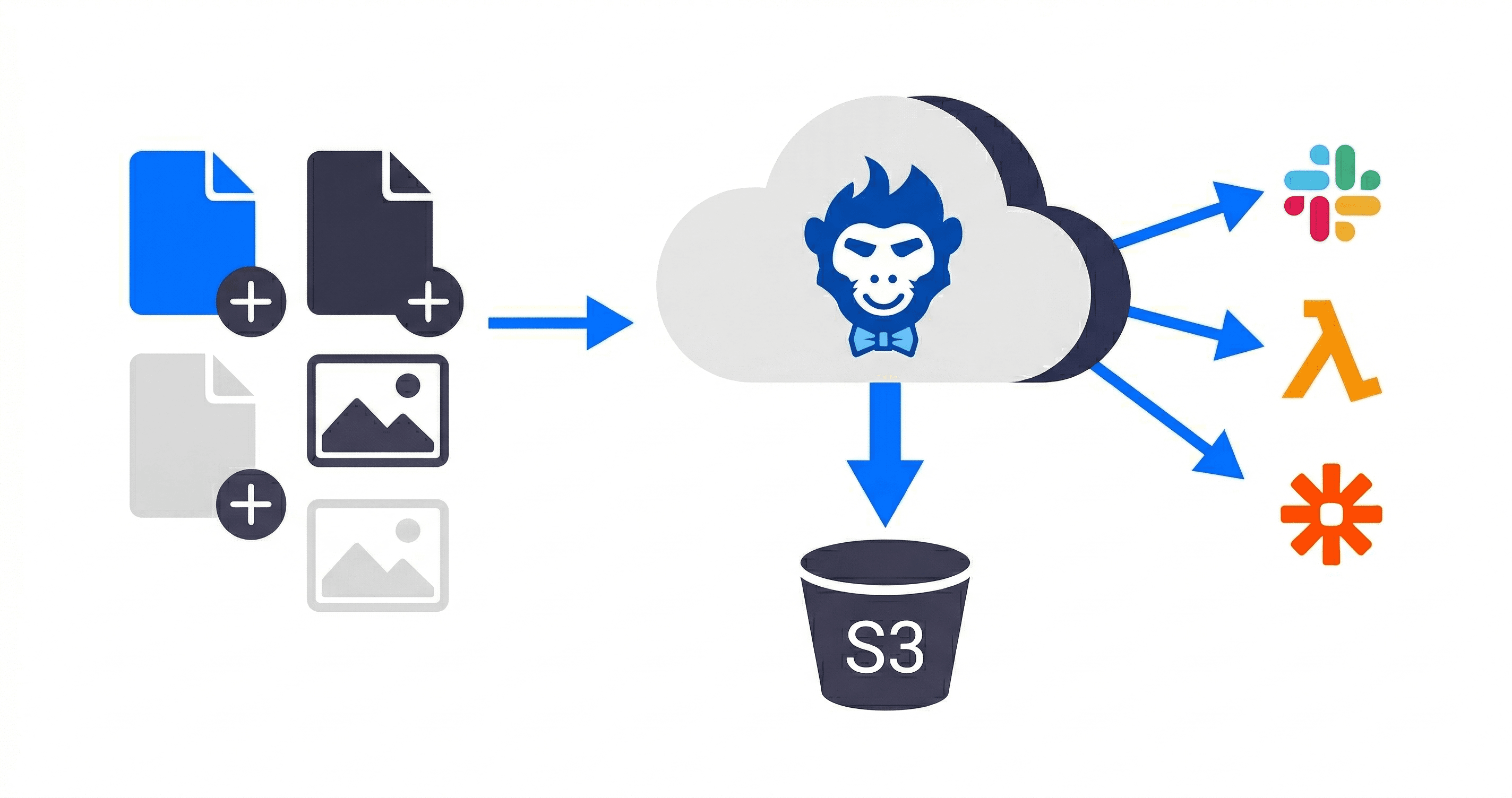

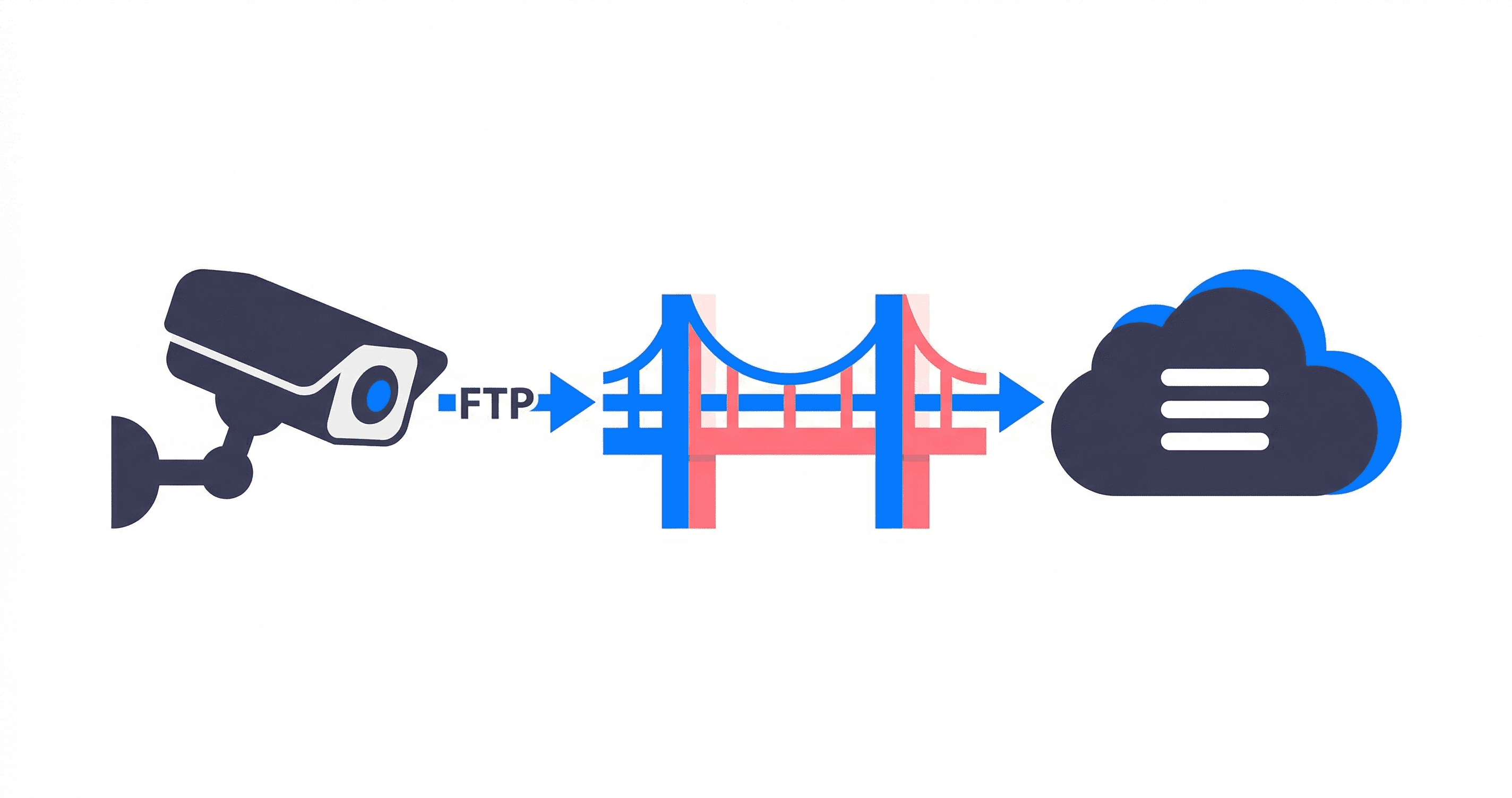

If your systems are entirely cloud-native, sure, S3 ObjectCreated events work fine. But the second you introduce an external vendor using an SFTP client or a legacy FTP camera, native S3 notifications become a nightmare.

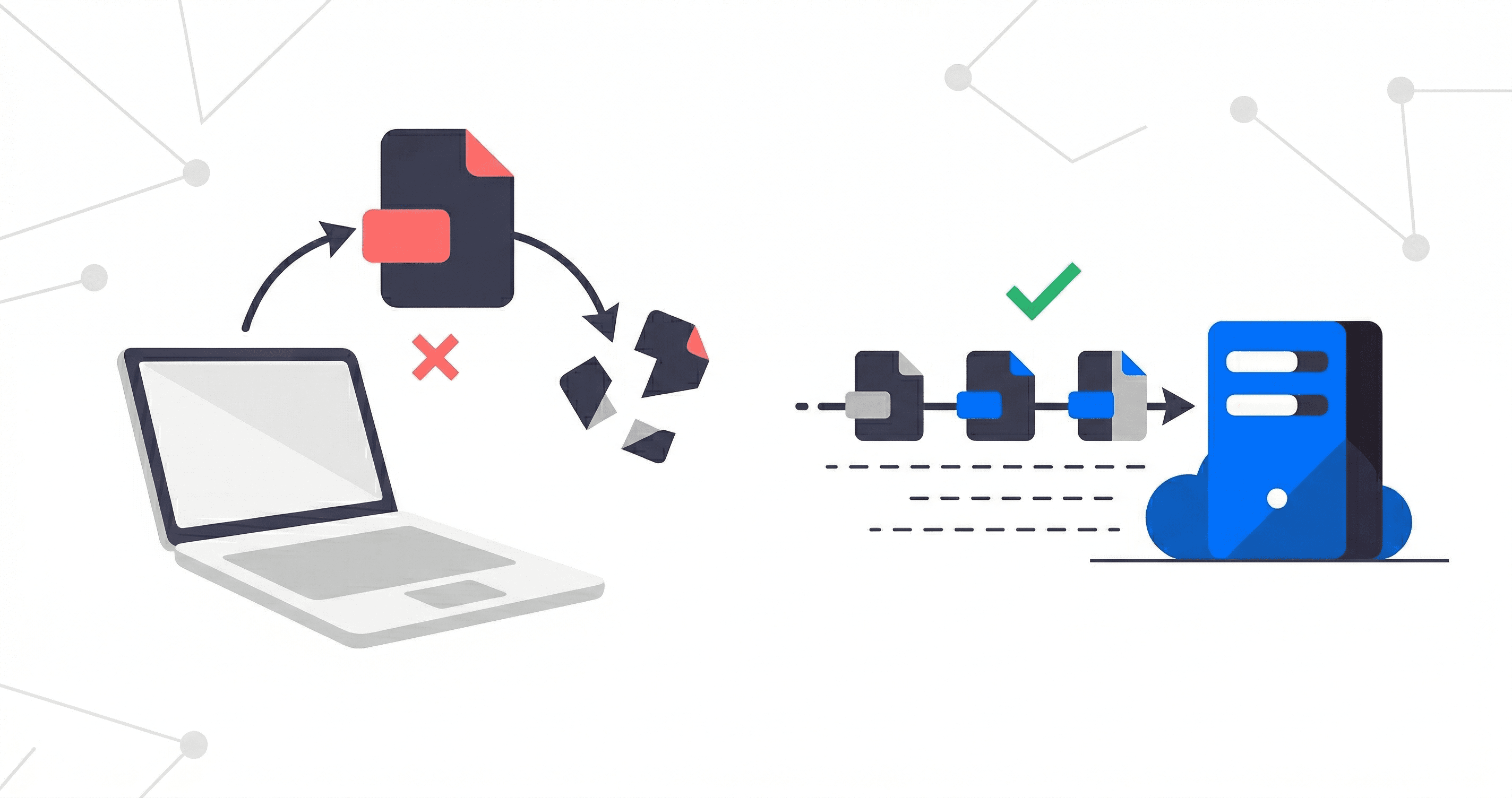

Why? S3 itself doesn't "go blind," but FTP and SFTP clients are notoriously messy. They often upload a massive file as invoice.pdf.tmp, drop the connection halfway, resume, finish, and finally issue a rename command (which, because S3 has no native rename, becomes a COPY followed by a DELETE).

If you hook a Lambda or Zapier trigger directly to raw S3 events, you will inevitably trigger downstream ETL jobs on half-baked .tmp or .filepart files, or process the same file three times. S3 also captures zero protocol context: it has no idea who the SFTP user was or whether the transfer crashed midway.

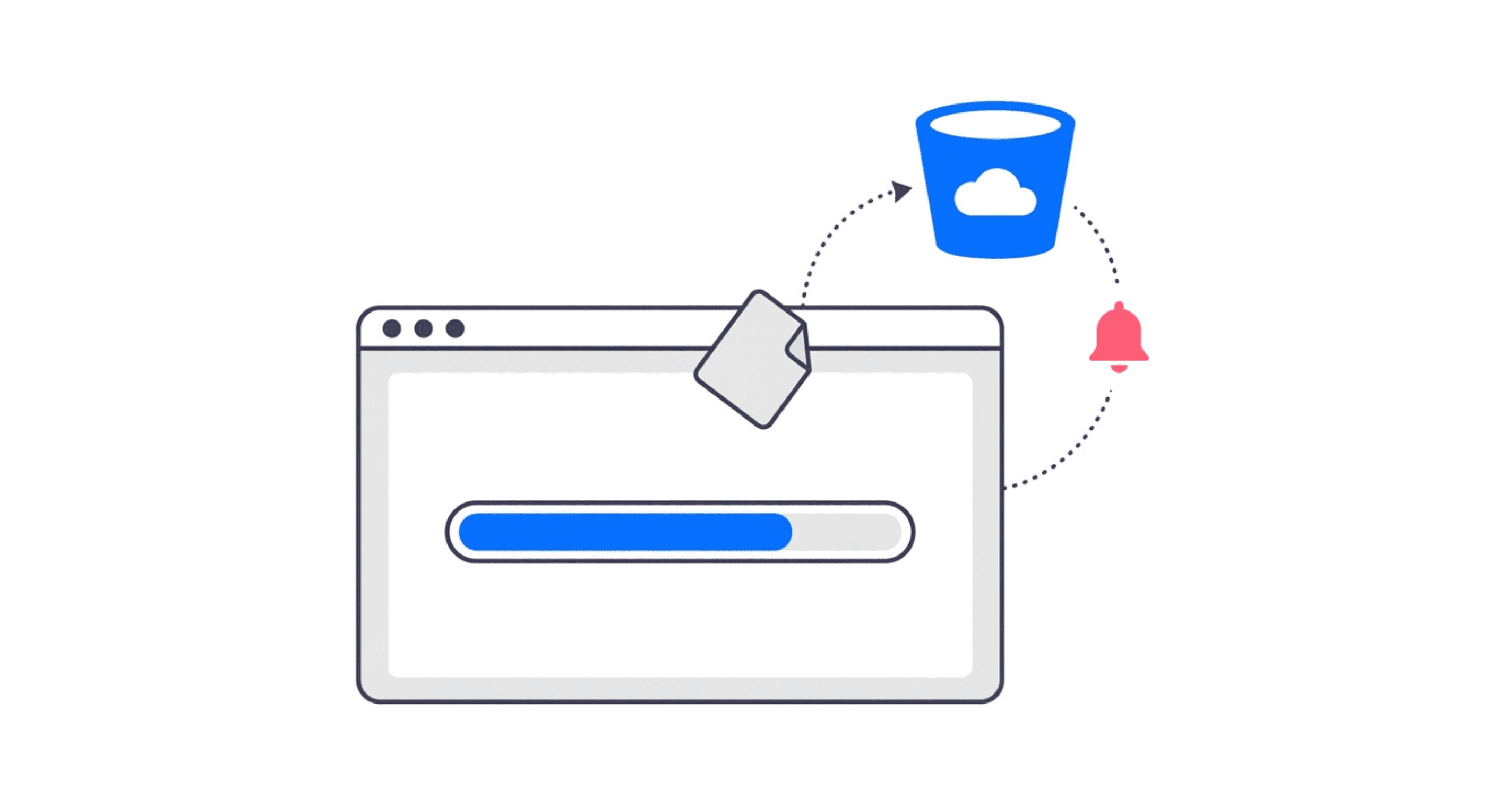

Here's the workaround we actually rely on: HMAC-signed webhooks fired as soon as a transfer finishes. No polling. No waiting on the next scheduled run. Just a clean HTTP POST the moment a transfer wraps up.

Here's what hits your endpoint:

    {
      "event": "file.status_changed",
      "timestamp": "2026-01-15T14:32:00.000Z",
      "data": {
        "pipe_id": "pip_123456789",
        "file_id": "fil_987654321",
        "filename": "invoice_march.pdf",
        "status": "transferred",
        "size": 2097152,
        "sender": "accounting_team",
        "protocol": "sftp",
        "dataStores": [
          { "dataStoreId": "dst_111222333", "status": "transferred" }
        ]
      }
    }

Every request ships with an X-Rilavek-Signature header. It’s a hex-encoded HMAC-SHA256 hash of the raw body. Verify this before you trust the payload. I can't stress this enough. Writing one line of crypto validation guards you against spoofed requests blindly kicking off database writes.

Verifying the Signature First

Don't skip this step. It takes thirty seconds to implement, but it prevents some bored scraper from artificially triggering your expensive ETL pipelines.

The massive gotcha? Do not pass req.body (the parsed object) into .update(). Pass the raw buffer. The hash validates against the exact bytes transmitted on the wire. If your middleware parses the JSON before you grab the payload, whatever you re-serialize won't match those bytes and every single request will fail validation. If you're running Express, chuck an express.raw({ type: 'application/json' }) on the route so req.body arrives as a Buffer. Problem solved.
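A minimal sketch of the check, using only Node's built-in crypto module. The function name verifySignature and the WEBHOOK_SECRET variable in the comment are placeholders of ours, not part of any Rilavek SDK; the only things taken from the text are the header name and the hex-encoded HMAC-SHA256 scheme.

```javascript
const crypto = require('node:crypto');

// Returns true only when signatureHex equals HMAC-SHA256(secret, rawBody).
// rawBody must be the unparsed request bytes (a Buffer), never the parsed object.
function verifySignature(rawBody, signatureHex, secret) {
  if (typeof signatureHex !== 'string') return false;
  const expected = crypto.createHmac('sha256', secret).update(rawBody).digest();
  const received = Buffer.from(signatureHex, 'hex');
  // timingSafeEqual throws on a length mismatch, so compare lengths first.
  if (received.length !== expected.length) return false;
  return crypto.timingSafeEqual(received, expected);
}

// Possible wiring on an Express route that uses express.raw (req.body is a Buffer):
//   const ok = verifySignature(req.body, req.get('X-Rilavek-Signature'),
//                              process.env.WEBHOOK_SECRET); // hypothetical env var
//   if (!ok) return res.sendStatus(401);
```

The constant-time comparison is a small extra over a plain string compare; it avoids leaking how many leading bytes of a forged signature happened to match.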

Pattern 1: Slack Alert on Upload

This is the lowest hanging fruit. Your ops team wants to know when a critical dataset hits the bucket. Please don't write another scheduled script for this. Just wire the webhook straight to a Slack channel.

Make sure to return that 200 immediately. Slack’s API gets jittery sometimes, and you don’t want your webhook listener timing out just because a chat room is struggling to render an emoji. Offload the heavy lifting and bail out fast.
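Here's one way that "acknowledge first, notify second" shape could look. Treat it as a sketch: respond is a hypothetical callback standing in for whatever your framework uses to send the status (for example, code => res.sendStatus(code) in Express), slackUrl is assumed to be a Slack incoming-webhook URL, and the message wording is ours. It relies on the global fetch available in Node 18+.

```javascript
// Builds the Slack incoming-webhook body for one event payload.
function formatSlackMessage(event) {
  const { filename, sender, protocol, size, status } = event.data;
  const mb = (size / (1024 * 1024)).toFixed(1);
  return { text: `File ${filename} (${mb} MB) from ${sender} via ${protocol}: ${status}` };
}

// Posts to Slack. Failures are logged, never thrown, so a Slack outage
// cannot turn into a failed webhook delivery.
async function notifySlack(slackUrl, event, fetchImpl = fetch) {
  try {
    const res = await fetchImpl(slackUrl, {
      method: 'POST',
      headers: { 'Content-Type': 'application/json' },
      body: JSON.stringify(formatSlackMessage(event)),
    });
    if (!res.ok) console.error(`Slack responded ${res.status}`);
  } catch (err) {
    console.error('Slack notification failed:', err.message);
  }
}

// Acknowledge the webhook first, then start the Slack call.
// The returned promise is for tests; the caller does not need to await it.
function handleWebhook(event, respond, slackUrl, fetchImpl = fetch) {
  respond(200);
  return notifySlack(slackUrl, event, fetchImpl);
}
```

Passing fetchImpl as a parameter is only there so the function can be exercised without touching the network.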

Pattern 2: API Gateway to Lambda ETL

Why write and host intermediate middleware just to proxy a trigger to an AWS Lambda? You shouldn't. The cleanest architecture is to point Rilavek's webhook directly at an AWS API Gateway endpoint (or a Lambda Function URL).

When the SFTP transfer finishes, Rilavek hits your API Gateway. API Gateway invokes your Lambda. Your Lambda validates the payload and starts the ETL job. Zero middleware required.

One architectural warning: API Gateway enforces a 29-second integration timeout by default. If your ETL job takes three minutes to parse a massive CSV, API Gateway will give up on the request long before the Lambda finishes. Rilavek will log a "Webhook Failed" timeout, and you might accidentally double-process on retries.

For heavy ETL pipelines, either configure your API Gateway integration to invoke the Lambda asynchronously (returning a 202 Accepted immediately to Rilavek), or have your synchronous Lambda immediately push a message to an SQS queue and exit, letting a secondary worker Lambda pull from the queue.
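The queue-and-exit variant might be sketched like this. The createHandler factory and the enqueue function are hypothetical names: enqueue stands in for whatever actually sends the message (in AWS it would typically wrap an SQS SendMessage call), and injecting it keeps the example free of SDK dependencies. The event fields used (body, isBase64Encoded, headers) are the ones API Gateway's Lambda proxy integration provides.

```javascript
const crypto = require('node:crypto');

// `secret` is the shared webhook secret; `enqueue` is an async function
// (hypothetical) that hands the raw payload to a queue.
function createHandler({ secret, enqueue }) {
  return async function handler(event) {
    // Proxy events carry the body as a string, optionally base64-encoded.
    const raw = Buffer.from(event.body || '', event.isBase64Encoded ? 'base64' : 'utf8');
    // Header casing differs between REST APIs, HTTP APIs and Function URLs.
    const headers = Object.fromEntries(
      Object.entries(event.headers || {}).map(([k, v]) => [k.toLowerCase(), v])
    );
    const expected = crypto.createHmac('sha256', secret).update(raw).digest();
    const received = Buffer.from(headers['x-rilavek-signature'] || '', 'hex');
    if (received.length !== expected.length || !crypto.timingSafeEqual(received, expected)) {
      return { statusCode: 401, body: 'invalid signature' };
    }
    // Hand the work off and return well inside the gateway timeout.
    await enqueue(raw.toString('utf8'));
    return { statusCode: 202, body: 'queued' };
  };
}
```

The worker Lambda that drains the queue then owns the slow part, and the webhook-facing handler only ever does a signature check and one enqueue.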

Pattern 3: Zapier Fan-Out

Maybe your team doesn't want to maintain custom middleware. That's fair. Zapier handles this routing beautifully using their "Webhooks by Zapier" integration.

- Spin up a new Zap and select Webhooks by Zapier (Catch Hook).

- Copy the obscure URL they give you.

- Paste that URL into your Rilavek webhook settings.

- Push a dummy file through your SFTP connection to capture the payload.

- Build out your fan-out logic.

The data.sender property is pure gold here. If different senders map to different vendors, use Zapier’s filter logic to branch the routing. The accounting firm gets a Slack ping. The logistics partner triggers a Salesforce update.

One brutally honest caveat about Zapier: their free tier imposes a miserable 15-minute polling delay on most triggers. The Webhook trigger sidesteps that limitation completely because it is pushed to rather than polled, making it one of the few Zapier patterns that genuinely feels real-time.

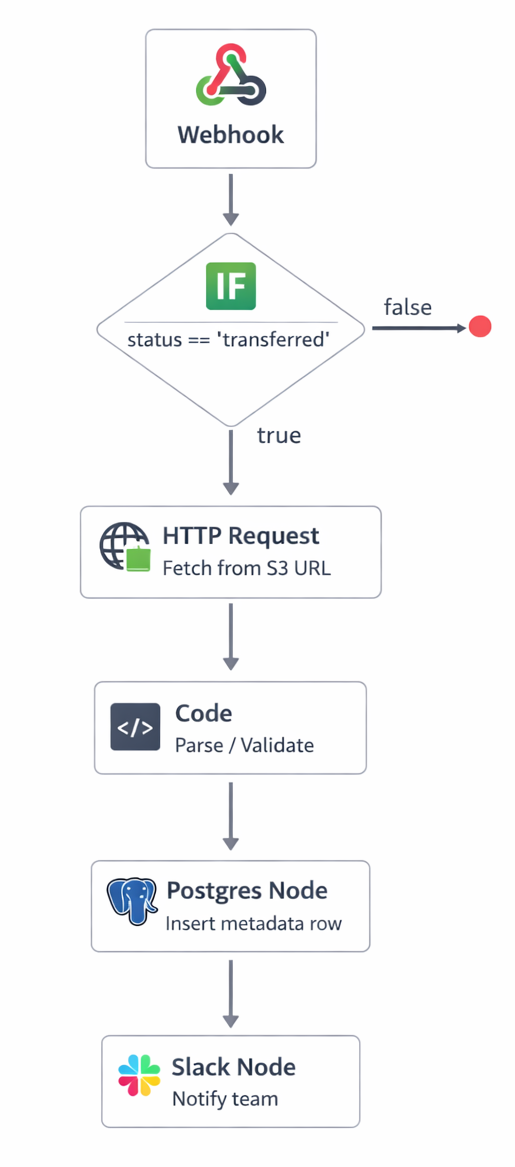

Pattern 4: n8n Self-Hosted Workflow

If you run n8n, the wiring is painless. Drop a Webhook node on the canvas, set the HTTP Method to POST, and copy the Production URL. Do not use the Test URL. It only works while the n8n editor tab remains open, and we’ve seen developers leave it configured in production. It breaks the minute they close their laptop.

Here's exactly how we structure this:

The huge advantage n8n holds over Zapier here? The Code node. You can run raw JavaScript to inspect data.filename, regex match it, branch based on the sender ID, or dynamically build an S3 presigned URL on the fly.
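As a rough idea of what that Code node logic could look like, here is a plain function that inspects the filename and sender and picks a route. The route labels, the invoice filename pattern, and the function name are all made up for illustration. The commented wiring at the bottom assumes the Webhook node exposes the parsed payload under json.body, which is worth confirming against your n8n version.

```javascript
// Decides where a file should go based on sender and filename.
// Sender names and route labels here are hypothetical examples.
function routeFile(data) {
  const invoice = /^invoice_([a-z]+)\.pdf$/i.exec(data.filename);
  if (data.sender === 'accounting_team' && invoice) {
    return { route: 'invoices', period: invoice[1].toLowerCase() };
  }
  if (/\.csv$/i.test(data.filename)) {
    return { route: 'etl' };
  }
  return { route: 'manual_review' };
}

// Inside an n8n Code node (mode: Run Once for All Items) this could be wired as:
//   return $input.all().map((item) => ({
//     json: { ...item.json.body.data, ...routeFile(item.json.body.data) },
//   }));
```

A Switch node downstream can then branch on the route field instead of repeating the regex in several places.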

Also, pay attention to file_id. Store that string in Postgres, in a column with a unique constraint, before processing. If a network hiccup causes a webhook retry, your database will catch the duplicate file_id and prevent you from double-processing a massive dataset.
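One way to sketch that dedupe step is an INSERT that quietly does nothing on conflict. The processed_files table and both function names are assumptions for the example; db is any client with a query(text, values) method that resolves to an object with rowCount, which is the shape node-postgres uses.

```javascript
// Assumed table (hypothetical name):
//   CREATE TABLE processed_files (file_id TEXT PRIMARY KEY);

// Returns true if this file_id is new, false if it was already recorded.
async function claimFile(db, fileId) {
  const result = await db.query(
    'INSERT INTO processed_files (file_id) VALUES ($1) ON CONFLICT (file_id) DO NOTHING',
    [fileId]
  );
  // One inserted row means we are the first to see this file_id.
  return result.rowCount === 1;
}

// Runs `work` only for the first delivery of a given file_id.
async function processOnce(db, event, work) {
  if (!(await claimFile(db, event.data.file_id))) return 'duplicate';
  await work(event);
  return 'processed';
}
```

Note the trade-off: the row is written before the work runs, so if work throws, a retry will be treated as a duplicate. If you need retries to re-run failed work, delete the row on failure or track a status column instead.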

The Status Field

Read this part carefully. The webhook triggers on every status change. That includes miserable failures. The status property can be transferred or failed. Do not blindly assume receiving a payload means the file is sitting pretty in your bucket.

Always gate your downstream logic:
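A minimal sketch of such a gate, assuming the two status values named above. The gateOnStatus name and the onTransferred / onFailed callbacks are placeholders for your own ETL kickoff and failure alerting.

```javascript
// Dispatches on data.status. Anything that is not an explicit
// "transferred" never reaches the success path.
function gateOnStatus(event, handlers) {
  if (event.event !== 'file.status_changed') return 'ignored';
  switch (event.data.status) {
    case 'transferred':
      handlers.onTransferred(event.data);
      return 'transferred';
    case 'failed':
      handlers.onFailed(event.data);
      return 'failed';
    default:
      // Unknown or future statuses: do nothing rather than assume success.
      return 'ignored';
  }
}
```

Defaulting to "ignored" is deliberate: if a new status ever shows up in the payload, the worst case is a missed notification, not an ETL run against a file that isn't there.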

A failed transfer from an external client is actually highly valuable context. You probably want a dedicated Slack channel piping in failures so your support crew knows exactly when to reach out. But firing off a "hey, your processed data is ready" email when the underlying upload crashed? That’s infinitely worse than staying silent.

Rilavek fires these events natively on every single pipe. You can finally step away from managing S3 polling infrastructure and get upload triggers as soon as a transfer finishes for basically any SFTP, FTPS, or raw FTP source touching your buckets.

Technical Reference & Next Steps

- Webhook Payload Specification: For a full list of event types and data fields, see the Rilavek Webhooks Documentation.

- Signature Verification: Ensure you implement HMAC-SHA256 verification to secure your ingestion endpoints.

- Sync Event Lifecycle: Learn more about how Rilavek tracks every file transfer in our Core Concepts guide.
